How should infrastructure and applications be built to make use of AI, which continues to evolve at a rapid pace?

Here are the initiatives taken by NTT DATA INTELLILINK.

What is Artificial Intelligence (AI)?

How should we view "artificial intelligence (AI)" in the first place?

One of the technologies receiving the most attention today is Artificial Intelligence (AI). AI is generally understood as "the artificial reproduction of the various kinds of perception and intelligence exhibited by humans".

In practice, however, there is no single fixed definition of AI. It is an area that continues to be discussed from various perspectives, including computer science, cognitive science, medicine, psychology, and even philosophy.

Deep learning significantly improves the accuracy of AI

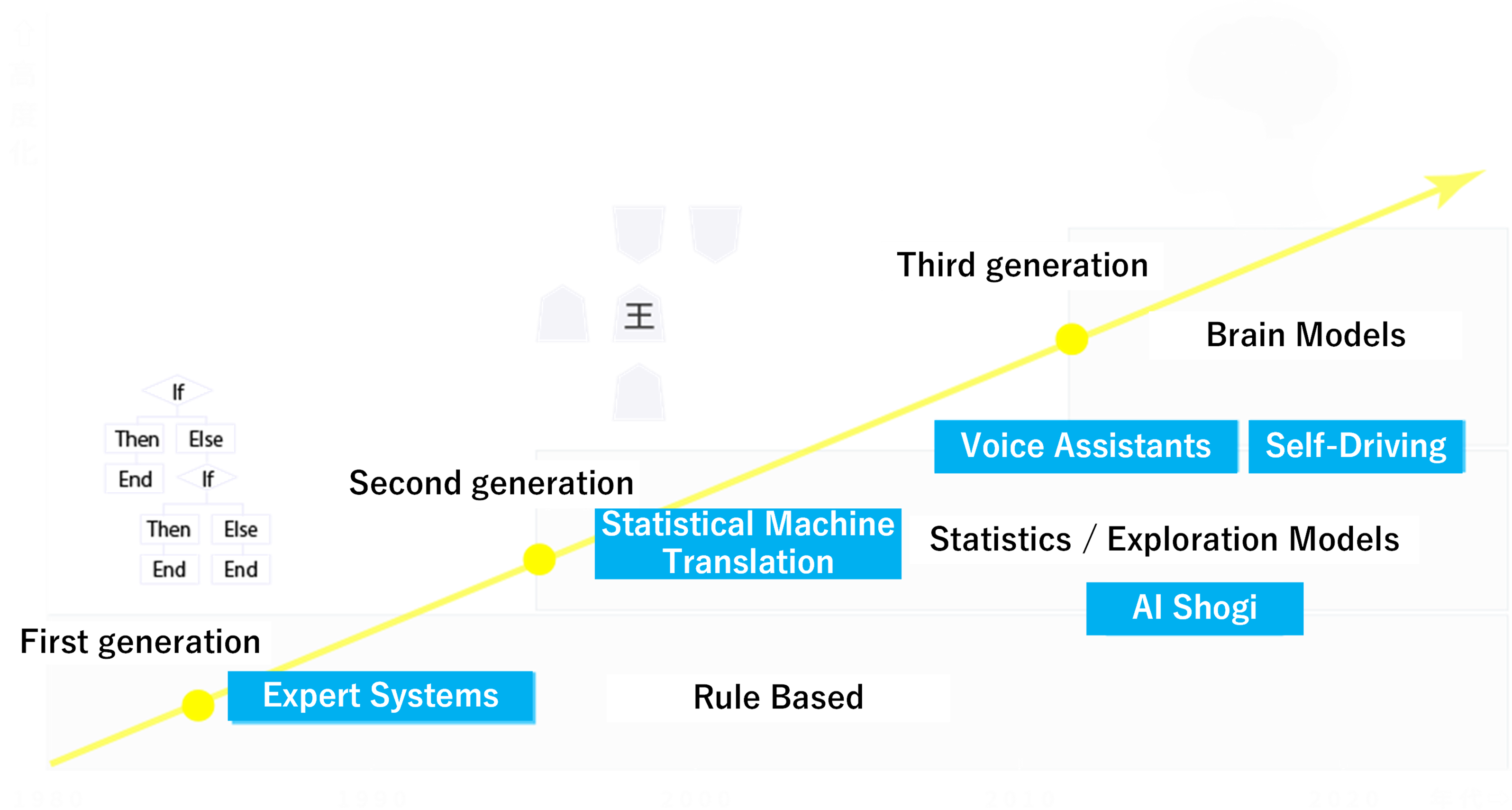

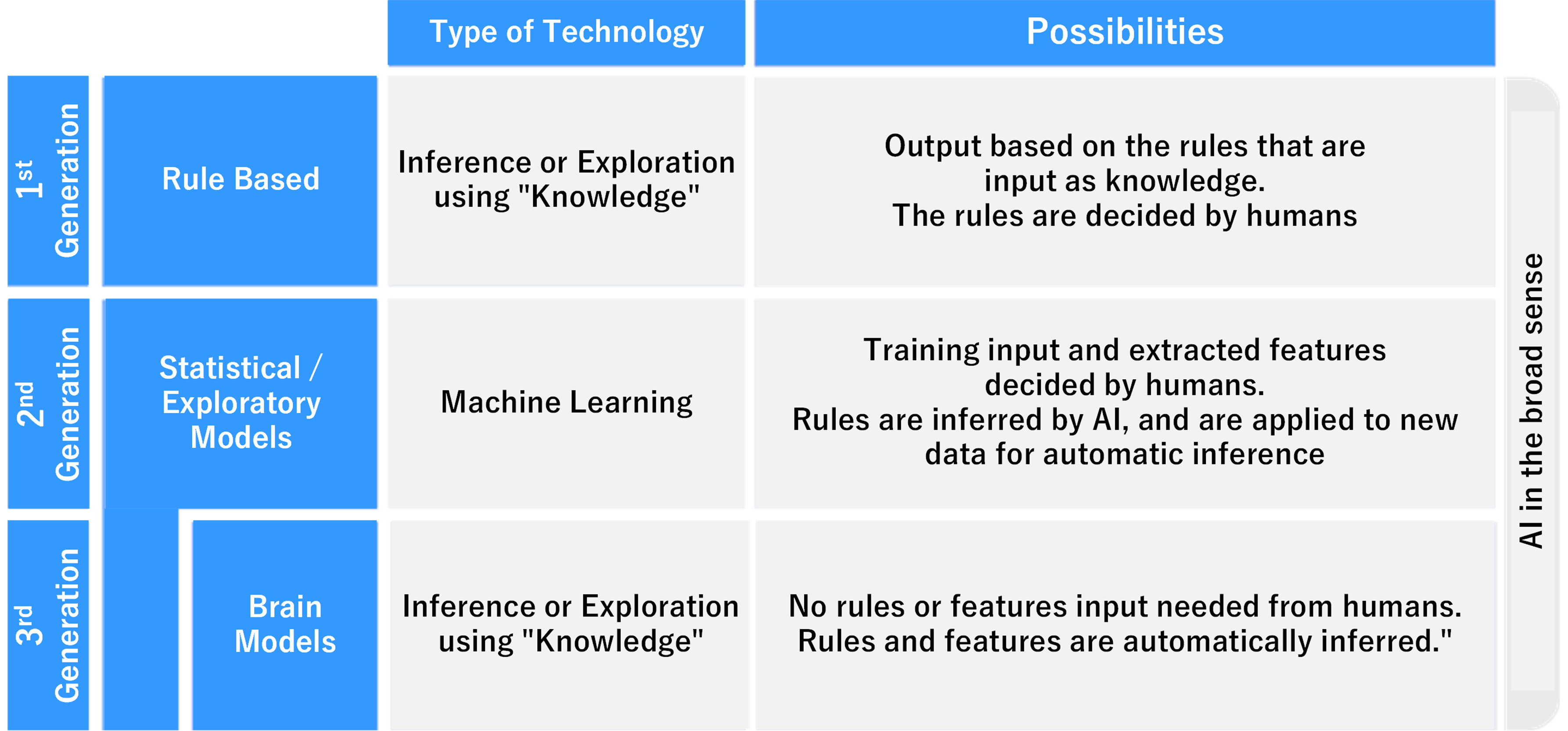

AI is shifting from the "first generation", which supports human intellectual tasks by encoding experts' rules of thumb, through the "second generation", which finds optimal solutions through statistical and search models, to the "third generation", which dramatically improves recognition performance based on models of the brain.

AI has rapidly gained attention in recent years precisely because of the emergence of this third-generation technology. A method called "deep learning", an algorithm modeled on the neural circuits of the human brain, is a typical example of third-generation technology. Unlike conventional machine learning, it does not need a data scientist to design features(*): the computer itself reads through large amounts of data and discovers features such as rules and correlations hidden in the data.

By reasoning inductively, as humans do, deep learning can also autonomously uncover "meaning" and "concepts".

In addition, it continues to learn and refine its intelligence afterwards.

Deep learning has greatly improved the accuracy of AI.

*Feature: information that is useful for identifying and predicting objects and events, either selected from the data items or derived by combining multiple data items.
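As a minimal sketch of what designing features by hand can look like in conventional machine learning, the example below builds one feature by selecting an existing data item and another by combining two data items. The record, its field names, and both helper functions are hypothetical and chosen only for illustration. Deep learning aims to discover such features from the data itself.

```python
# Hypothetical customer record; the field names are illustrative assumptions.
record = {"monthly_spend": 12000.0, "visits": 4, "age": 35}


def selected_feature(rec, key):
    """A feature selected directly from an existing data item."""
    return float(rec[key])


def combined_feature(rec):
    """A feature derived by combining two data items: spend per visit."""
    if rec["visits"] == 0:
        return 0.0  # avoid division by zero when there were no visits
    return rec["monthly_spend"] / rec["visits"]


# A hand-designed feature vector for this one record.
features = [selected_feature(record, "age"), combined_feature(record)]
print(features)  # prints [35.0, 3000.0]
```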

Using AI methods from every generation in the right place at the right time

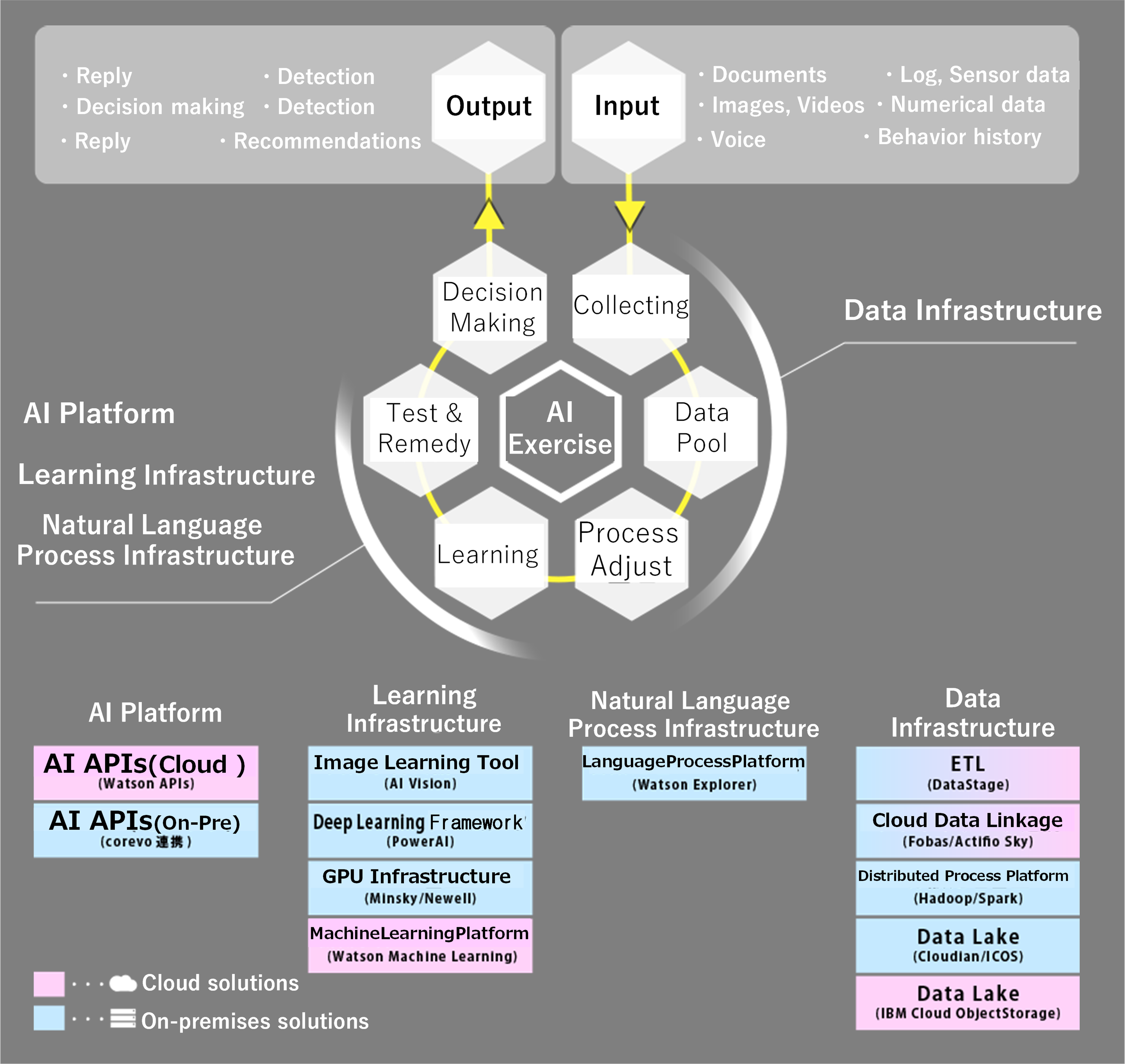

Going forward, businesses will use AI to design solutions for various business issues by combining first- to third-generation methods where each fits best and seamlessly integrating them with mobile, wearable, IoT, and analytics (statistical analysis) systems.

Generally speaking, AI is used in the following three areas.

- Image: Understands the meaning of images and sorts and organizes them
- Natural Language: Interprets the intent of spoken words and text
- Numerical Values: Reads numerical patterns to predict and detect

Key Points of AI Application in Business Scenarios

When considering the introduction of AI, the focus tends to be on the learning part. To use AI effectively and continuously, however, it is important to keep the entire AI lifecycle turning properly.

We draw on the knowledge and expertise gained through system infrastructure construction and application development to provide comprehensive services, ranging from the construction of data lakes for storing learning data to the construction of deep learning platforms, AI application development, and technical support for AI implementation.

(*We have extensive experience in bilingual projects. Please contact us for details.)

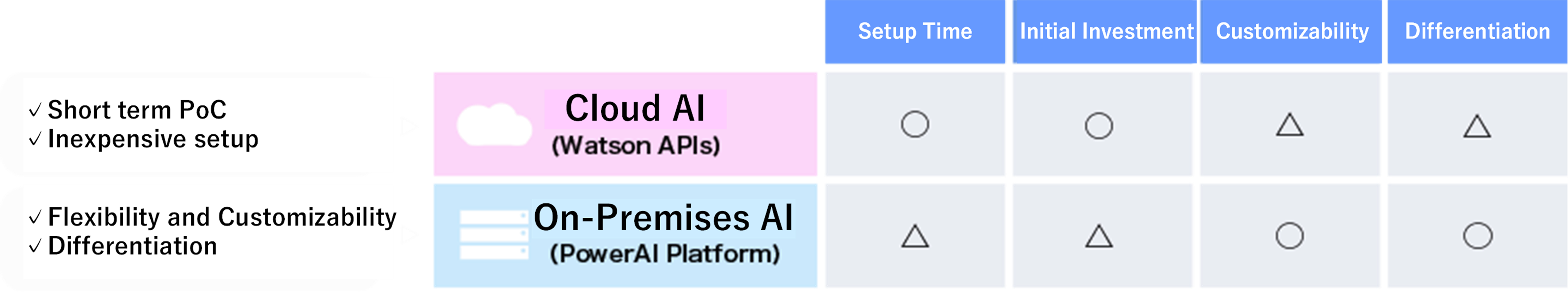

Cloud AI vs. On-Premise AI